Note that if \(X_i\) is the random variable defined by

\begin{equation*}

X_i(x)=\begin{cases}1 \amp \text{if the }i\text{-th entry of }x\text{ is }1 \\ 0 \amp \text{otherwise} \end{cases} \, ,

\end{equation*}

then we have that

\begin{equation*}

X=\sum_{i=1}^n X_i \, .

\end{equation*}

Observe that

\begin{equation*}

E[X_i]= \sum_{x\in \Bin_n} P(x)X_i(x) = \sum_{x\in \Bin_n: x_i=1} \frac{1}{2^n}\cdot 1 = \frac{2^{n-1}}{2^n} = \frac{1}{2} \, .

\end{equation*}

Equivalently, \(X_i\) is the indicator random variable for the event that the \(i\)-th entry of \(x\) is \(1\text{.}\) Since exactly half of the \(2^n\) binary strings of length \(n\) have a \(1\) in the \(i\)-th position, and all strings are equally likely, we have \(P(X_i=1)=1/2\text{,}\) so

\begin{equation*}

E[X_i]=1\cdot P(X_i=1)+0\cdot P(X_i=0)=1/2\text{.}

\end{equation*}
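
As a sanity check, the value \(E[X_i]=1/2\) can be confirmed by brute-force enumeration for small \(n\text{.}\) The sketch below is illustrative only: the function name `expected_indicator` and the representation of binary strings as tuples of 0s and 1s are assumptions made here, not part of the text. It assumes each string of length \(n\) has probability \(1/2^n\text{,}\) as in the sum above.

```python
from fractions import Fraction
from itertools import product


def expected_indicator(n, i):
    """Return E[X_i] for uniformly random binary strings of length n.

    The position i is 1-based, matching the notation X_i in the text.
    """
    strings = list(product((0, 1), repeat=n))
    # Each string x has probability 1/2^n, and X_i(x) is the i-th entry of x.
    return sum(Fraction(x[i - 1], len(strings)) for x in strings)


if __name__ == "__main__":
    for i in range(1, 5):
        print(i, expected_indicator(4, i))
```

Exact rational arithmetic with `Fraction` avoids floating-point rounding, so the result can be compared directly with \(1/2\text{.}\)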

By linearity of expectation,

\begin{align*}

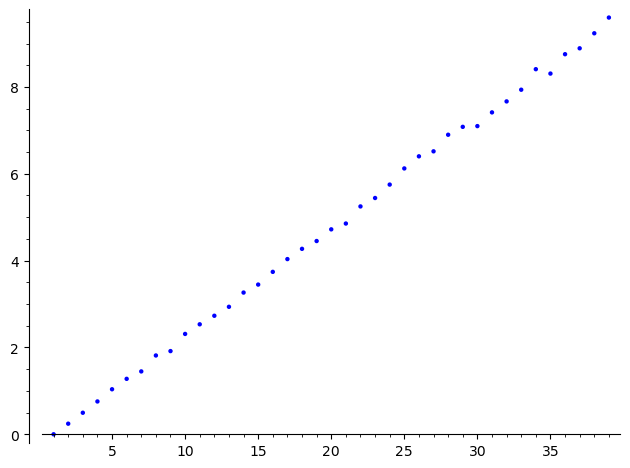

E[X] \amp = E\left[\sum_{i=1}^n X_i\right] \\

\amp = \sum_{i=1}^n E[X_i] \\

\amp = \sum_{i=1}^n \frac{1}{2} \\

\amp = \frac{n}{2} \, .

\end{align*}
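
The conclusion \(E[X]=n/2\) can also be checked directly, without linearity, by averaging the number of \(1\)s over all \(2^n\) strings. The following is a minimal sketch under the same assumption of a uniform distribution on binary strings of length \(n\text{;}\) the name `expected_ones` is hypothetical and chosen only for this illustration.

```python
from fractions import Fraction
from itertools import product


def expected_ones(n):
    """Return E[X], the expected number of 1s in a uniformly random
    binary string of length n, computed by direct enumeration."""
    strings = list(product((0, 1), repeat=n))
    # X(x) = sum(x) counts the 1s in x; each string has probability 1/2^n.
    return sum(Fraction(sum(x), len(strings)) for x in strings)


if __name__ == "__main__":
    for n in range(1, 9):
        # Compare the brute-force value with the closed form n/2.
        print(n, expected_ones(n), Fraction(n, 2))
```

The enumeration takes time exponential in \(n\text{,}\) whereas the linearity argument gives the answer for every \(n\) at once, which is the point of the technique.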